Affinity Groups

| Name | Description | Tags |
|---|---|---|
| Launch | Launch is a regional computational resource that supports researchers incorporating computational and data-enabled approaches in their scientific workflows at eleven under-resourced institutions in… | ai, data-analysis |

Announcements

Upcoming Events & Trainings

No events or trainings are currently scheduled.

Knowledge Base Resources

| Title | Category | Tags | Skill Level |
|---|---|---|---|
| AI Institutes Cyberinfrastructure Documents: SAIL Meeting | Learning | ACCESS-account, ai, data-analysis, +1 more | Beginner, Intermediate, Advanced |
| An Introduction to the Julia Programming Language | Learning | ai, data-analysis, machine-learning, +1 more | Beginner |
| Applications of Machine Learning in Engineering and Parameter Tuning Tutorial | Learning | data-analysis, machine-learning, python | Beginner, Intermediate |

Engagements

Investigation of robustness of state of the art methods for anxiety detection in real-world conditions

University of Illinois at Urbana-Champaign

Status: Complete

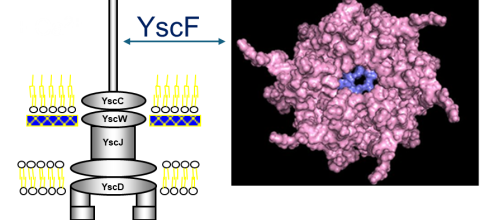

Prediction of Polymerization of the Yersinia Pestis Type III Secretion System

Nova Southeastern University

Status: Complete

Bayesian nonparametric ensemble air quality model predictions at high spatio-temporal daily nationwide 1 km grid cell

Columbia University

Status: Complete