Browse examples of current and completed engagements.

Re-engineering Lilly’s Kisunla™ into a novel antibody targeting IL13RA2 against GBM using AI-driven macromolecular modeling

Atrium Health Levine Cancer

Status: In Progress

Investigation of robustness of state-of-the-art methods for anxiety detection in real-world conditions

University of Illinois at Urbana-Champaign

Status: Complete

Adapting a GEOspatial Agent-based model for Covid Transmission (GeoACT) for general use

University of California San Diego

Status: Complete

Exploring Small Metal Doped Magnesium Hydride Clusters for Hydrogen Storage Materials

Murray State University

Status: Complete

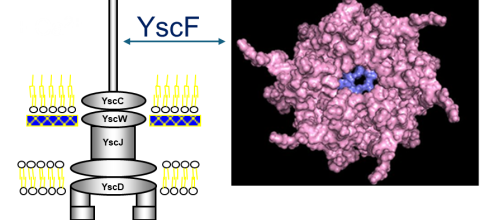

Prediction of Polymerization of the Yersinia pestis Type III Secretion System

Nova Southeastern University

Status: Complete

CHE230120: Natural Bond Order Calculation of Fe(II) isonitrile complex using the ORCA computational suite

Drexel University

Status: Complete

Bayesian nonparametric ensemble air quality model predictions at high spatio-temporal daily nationwide 1 km grid cell

Columbia University

Status: Complete